Part two discussed how five people with the same experiences might give five different reviews. This part will root these ideas in statistics and show why sorting restaurants, books, and hotels by their overall rating doesn’t yield better decisions. In fact, I began wondering whether a coin toss wouldn’t make a better criterion.

In a perfect world, the ratings would come from like-minded people with the same idea of what every score represents. Even if some people reviewed differently, it would affect most ratings to a similar extent and cancel out on average. Unfortunately, we don’t live there.

It’s not apples to apples

Imagine you crashed a Big Mac lovers’ convention and asked some people to rate their favorite McDonald’s. You can bet they’d rate it much higher than the average person would. On the flip side, you could survey culinary critics and, in all likelihood, obtain a lower number. Anything is possible if you ask the right people – it’s called a biased sample.

You may not have realized it, but most online ratings suffer from this problem. Remember the McDonald’s with the same 4.4 score as Nakamura, a restaurant with three Michelin stars? They were simply reviewed by two different groups of people.

This isn’t mysterious at all. People typically pay for things they expect to enjoy. The anti-fast food crowd does not write these reviews because they avoid McDonald’s in the first place. That’s an acquisition bias.
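To make this concrete, here is a minimal sketch in Python. Everything in it is made up for illustration: the population, the 1-5 enjoyment scale, and the `visit_threshold` above which a person decides to visit and review. It only shows the mechanism, not real data.

```python
import random
import statistics

def simulate_self_selection(n_people=10_000, visit_threshold=3.5, seed=42):
    """Compare everyone's taste with the taste of those who choose to visit."""
    rng = random.Random(seed)
    # Hypothetical population: how much each person would enjoy the place (1-5).
    enjoyment = [min(5.0, max(1.0, rng.gauss(3.0, 1.0))) for _ in range(n_people)]
    # Assumption: people only visit (and so only review) if they expect to enjoy it.
    reviewers = [score for score in enjoyment if score >= visit_threshold]
    return statistics.mean(enjoyment), statistics.mean(reviewers), len(reviewers)

population_avg, reviewer_avg, n_reviewers = simulate_self_selection()
print(f"Everyone:       {population_avg:.2f}")
print(f"Reviewers only: {reviewer_avg:.2f} ({n_reviewers} reviews)")
```

The published score is the second number, because the people behind the first one never showed up to leave a review.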

A bakery surrounded by skyscrapers is typically seen through the eyes of a corporate employee, while an eatery surrounded by factories will receive the most attention from assembly line workers. Vastly different perspectives. It’s true for pretty much everything we rate: family-friendly hotels, teenage drama blockbusters, and self-help books.

The typical reviewer also changes over time. A newly opened restaurant attracts people who don’t mind some risk, while the play-it-safe crowd only joins the party once the numbers improve. Scary Movie was easy to grasp twenty years ago, but many of its pop culture references aren’t so obvious anymore. And I don’t even want to get started on cancel culture.

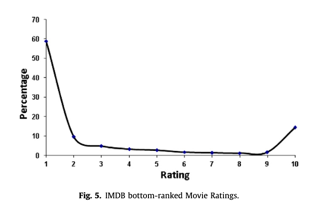

And if we zoom out and look at larger populations, in Western culture we tend to review things that are either particularly good or particularly bad. The average experience often goes forgotten, ignored, and unreviewed. It’s called an underreporting bias and can be seen, for example, on IMDb [1]:

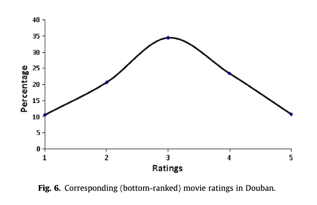

The distribution of scores on Douban, a Chinese movie reviews site, is very different. The users there seem to routinely report even their average experiences [1]:

Consider that before dismissing a Chinese restaurant popular among the local population just because it scored lower than the pizza place next door.
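The effect of underreporting is easy to reproduce with a toy simulation. In the sketch below, two groups of customers have exactly the same experiences and differ only in how likely they are to write a review. The experience mix and the reporting probabilities are assumptions I made up, not figures from the cited study.

```python
import random
from collections import Counter

# Hypothetical mix of true experiences: mostly average, rarely extreme.
TRUE_EXPERIENCE_WEIGHTS = {1: 5, 2: 15, 3: 40, 4: 30, 5: 10}

def simulate_reviews(report_probability, n_customers=20_000, seed=7):
    """Return how many reviews each star rating receives."""
    rng = random.Random(seed)
    stars_options = list(TRUE_EXPERIENCE_WEIGHTS)
    weights = list(TRUE_EXPERIENCE_WEIGHTS.values())
    counts = Counter({stars: 0 for stars in stars_options})
    for _ in range(n_customers):
        stars = rng.choices(stars_options, weights=weights)[0]
        # A customer leaves a review only with the probability assumed for that rating.
        if rng.random() < report_probability[stars]:
            counts[stars] += 1
    return counts

# Assumed habits: one group mostly reports extremes, the other reports everything alike.
extremes_only = simulate_reviews({1: 0.8, 2: 0.2, 3: 0.05, 4: 0.2, 5: 0.8})
everyone_alike = simulate_reviews({1: 0.5, 2: 0.5, 3: 0.5, 4: 0.5, 5: 0.5})

for label, counts in [("Extremes only", extremes_only), ("Everyone alike", everyone_alike)]:
    total = sum(counts.values())
    shares = {stars: round(counts[stars] / total, 2) for stars in sorted(counts)}
    print(label, shares)
```

Identical experiences produce two very different histograms. In the first group the three-star reviews nearly vanish, while in the second they dominate.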

Rating venues affect ratings

Just like places attract different customers, so do rating websites. Google Maps users seem to have different preferences in aggregate than those of TripAdvisor, Trivago, or Yelp. For example, chain restaurants are rated 2.7 on TripAdvisor and 3.8 on Google Maps [4]. Then, the prolific and verified reviewers often found on Yelp tend to rate lower than novice and anonymous reviewers – even though the more honest negative feedback tends to come from the latter [5].

But there is more. Our choices are also influenced by the design of the review experience. Sometimes it’s obvious, like in the following comparison of Amazon and Goodreads from 2015 [2]:

But other times, it is almost imperceptible. Our opinion changes simply by reading other reviews before writing ours [3]. It goes beyond reading, too. A group of researchers went on internet forums and randomly gave new posts their first upvote or downvote. The results? After a couple of months, the upvoted ones were much more successful. It’s called a herding effect.
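Here is a toy model of how a single early vote can snowball. It is not the researchers’ method, just a sketch under one assumption of mine: every visitor’s chance of upvoting starts at 50% and drifts slightly with the score they see. The `herding` strength and the other parameters are hypothetical.

```python
import random
import statistics

def final_score(initial_vote, rng, n_visitors=100, herding=0.005):
    """Simulate one post of fixed quality and return its final score."""
    score = initial_vote
    for _ in range(n_visitors):
        # Assumption: the visible score nudges the next visitor's vote.
        p_up = min(0.9, max(0.1, 0.5 + herding * score))
        score += 1 if rng.random() < p_up else -1
    return score

def average_final_score(initial_vote, n_posts=5_000, seed=1):
    """Average the final score over many identical posts."""
    rng = random.Random(seed)
    return statistics.mean(final_score(initial_vote, rng) for _ in range(n_posts))

control = average_final_score(initial_vote=0)
upvoted = average_final_score(initial_vote=1)
print(f"No early vote:   {control:.2f}")
print(f"One early upvote: {upvoted:.2f}")
```

Both groups of posts are equally good by construction, and both runs use the same seed so the visitors’ random draws match. The only difference is that first vote, yet the upvoted group ends up with a lead bigger than the single point it was given.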

Herding is so powerful it can make or break a product, which is why social media hackers rely on it. So did we with [bench]. Before our Product Hunt launch, we gave demos to several reputable users who then became our first upvoters. This set us up for success, which we doubled down on by offering demos and free plans through Twitter to everyone who upvoted us. In the end, [bench] became the Product of the Day with more than a thousand upvotes.

Finally, many of the factors discussed above fluctuate over time. The design of the rating experience gets updates, the rating websites attract new audiences, and the cultural norms evolve. Every change impacts the scores. So how can we tell what these numbers actually mean?

And that was part three of the Online Reviews series. In part four, I will discuss the conflicts of interest that make it even harder for me to trust these scores. In short, the companies that tally the scores are the same ones that profit from our purchases. See you next time!

Special thanks to John Nicholas for his suggestions and feedback on this post.

Footnotes

1. Koh, N., Hu, N., & Clemons, E. (2010). Do Online Reviews Reflect a Product’s True Perceived Quality? – An Investigation of Online Movie Reviews Across Cultures. Electronic Commerce Research and Applications, 9, 374-385. doi:10.1016/j.elerap.2010.04.001.

2. One Reason Why Amazon’s Reviews are Higher than Goodreads.

3. Aral, S. (2014). The problem with online ratings. MIT Sloan Management Review, 55(2), 47.

4. Li, H., & Hecht, B. (2021). 3 Stars on Yelp, 4 stars on google maps: a cross-platform examination of restaurant ratings. Proceedings of the ACM on Human-Computer Interaction, 4(CSCW3), 1-25.

5. Wang, Z. (2010). Anonymity, Social Image, and the Competition for Volunteers: A Case Study of the Online Market for Reviews. The B.E. Journal of Economic Analysis & Policy, 10(1). doi:10.2202/1935-1682.2523.
